Data Compression is one of the exciting features available in the SQL Server 2008 release. It’s only available on the Enterprise and Developer Edition. Unlike backup compression, you can control it by setting in the SQL instance level. Data Compression requires individual handling on table, index, indexed view and partition.

Where to apply SQL Server data compression

Data compression only works for Enterprise Edition and Developer Edition and can be applied to the following objects:

- Heap Table

- Clustered index

- Non-clustered index

- Indexed view

- Partitioned table and partitioned index

Data compression is not enabled by default and can’t be controlled at the instance level or database level. You will need to enable it individually for each object. It can be enabled at the time when you create the object by using CREATE TABLE or CREATE INDEX statement or enabled later by issuing ALTER TABLE or ALTER INDEX. Below are the sample statements for each statement.

CREATE TABLE dbo.T1 (c1 int, c2 nvarchar(200) )WITH (DATA_COMPRESSION = ROW);

CREATE NONCLUSTERED INDEX IX_INDEX_1 ON T1 (C2)WITH ( DATA_COMPRESSION = ROW ) ;

ALTER TABLE T1 REBUILD WITH (DATA_COMPRESSION = PAGE);

ALTER INDEX IX_INDEX1 ON T1REBUILD WITH ( DATA_COMPRESSION = PAGE )

Inheritance of data compression

A non-clustered Index doesn’t automatically become compressed just because you enable compression on the table level (heap or clustered index). The same with table and index partition; one partition can be compressed while the others remained uncompressed.

But if you create a clustered index on a compressed heap table, then the clustered index WILL inherit the compression setting on original heap table.

When data compression won’t work

If the maximum row size plus the compression overhead exceeds the maximum row size of 8060 bytes, you will get this error message when trying to compress the table:

Msg 1701, Level 16, State 1, Line 1

Creating or altering table ‘table_name’ failed because the minimum row size would be 8073, including 18 bytes of internal overhead. This exceeds the maximum allowable table row size of 8060 bytes.

As of now data compression doesn’t work with XML, BLOB and MAX data types.

Calculating space savings

Before implementing data compression on a database, we should know how much space we can save with compression. Here I have developed the code to collect space saving estimation for the entire database.

Replace db_name with the database name in which you want to evaluate the space saving through Data Compression. As there are two options for data compression, you can either set @compress_option to be “Page” or “Row,” and compare the saving result. I also included error handling in case any of the tables have row size that exceeds 8060 bytes after adding compression overhead.

use db_namegodeclare @compress_option varchar(10)set @compress_option = 'Page'--set @compress_option = 'Row'SET NOCOUNT ON if OBJECT_ID('tempdb..#index') is not nulldrop table #indexselect c.name as sch_name, b.name obj_name, a.name as index_name, a.indid,dpages, convert(numeric(20,2),round(dpages*8/1024.,2)) as dpages_MB, reserved, convert(numeric(20,2),round(reserved*8/1024.,2)) as reserved_MB, rowcnt into #index from sys.sysindexes a inner join sysobjects b on a.id = b.idinner join sys.schemas c on b.uid = c.schema_idwhere b.type = 'U' and (a.name not like '_WA_Sys%' or a.name is null)and convert(numeric(20,2),round(dpages*8/1024.,2)) > = 0 order by dpagesIF OBJECT_ID('tempdb..#compression_error') is not nulldrop table #compression_errorCREATE TABLE #compression_error (error_desc varchar(2000), err_number int, err_severity int, err_state int, err_procedure varchar(126), err_line int, err_message varchar(2048))IF OBJECT_ID('tempdb..#datacompress') is not nulldrop table #datacompresscreate table #datacompress(object_name sysname,schema_name sysname,index_id int,partition_number int,size_with_current_compression_setting_KB bigint,size_with_requested_compression_setting_KB bigint,sample_size_with_current_compression_setting_KB bigint,sample_size_with_requested_compression_setting_KB bigint)--select * from #datacompressDECLARE @compress_sql varchar(8000)SET NOCOUNT ON DECLARE compress_cursor CURSOR FOR SELECT 'BEGIN TRYINSERT INTO #datacompress EXEC sp_estimate_data_compression_savings @schema_name = '''+c.name+''', @object_name = '''+b.name+''', @index_id = '+convert(varchar(10),a.indid)+', @partition_number = NULL, @data_compression = '''+@compress_option+''' ; END TRYBEGIN CATCHINSERT INTO #compression_error SELECT ''['+c.name+'].['+b.name+'] failed in compression evaluation because the minimum row size plus internal overhead exceeds the maximum allowable table row size of 8060 bytes.'' AS Error_Desc ,ERROR_NUMBER() AS ErrorNumber ,ERROR_SEVERITY() AS ErrorSeverity ,ERROR_STATE() AS ErrorState ,ERROR_PROCEDURE() AS ErrorProcedure ,ERROR_LINE() AS ErrorLine ,ERROR_MESSAGE() AS ErrorMessage;END CATCH;'from sys.sysindexes a inner join sysobjects b on a.id = b.idinner join sys.schemas c on b.uid = c.schema_idwhere b.type = 'U' and (a.name not like '_WA_Sys%' or a.name is null)and convert(numeric(20,2),round(dpages*8/1024.,2)) > = 0 order by dpagesOPEN compress_cursor;FETCH NEXT FROM compress_cursor INTO @compress_sql;WHILE @@FETCH_STATUS = 0BEGIN exec (@compress_sql)FETCH NEXT FROM compress_cursor INTO @compress_sql;ENDCLOSE compress_cursor;DEALLOCATE compress_cursor;SET NOCOUNT OFF

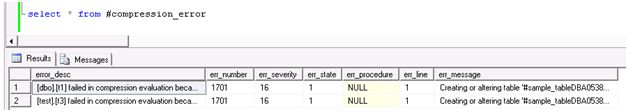

Tables that can’t be compressed

By executing the above script, you can collect the compression rate for all the objects within the specified database. If there is any table that can’t be compressed due to the row size, you can review the not compressable list of tables by querying temporary table #compression_error from the query session where you execute above script. Here are the sample result set of #compression_error. The table contains information like table name and the exact result why the table can’t be compressed.

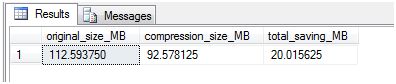

Total saving through data compression

Certainly we want to know much we are saving by implementing data compression, either through PAGE compression or ROW compression. Here is a quick way to summarize the total saving in MB. Execute below statement in the same query session.

SELECT sum(size_with_current_compression_setting_KB)/1024. AS original_size_MB,sum(size_with_requested_compression_setting_KB)/1024. AS compression_size_MB,sum(size_with_current_compression_setting_KB-size_with_requested_compression_setting_KB)/1024. AS total_saving_MB FROM #datacompress

Here is the result set:

Saving estimation Summary

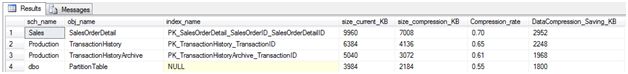

We can also take a more gradual approach in applying data compression. Instead of applying for all objects within the database, you can review how much saving we can get for each object/table/schema. And maybe target the historical table that’s being used rarely but occupying most space. The below query provides breakdown on each object and index and how much space it can save by data compression (under the same query session).

SELECT a.obj_name, a.index_name, size_with_current_compression_setting_KB AS size_current_KB, size_with_requested_compression_setting_KB AS size_compression_KB, Compression_rate = convert(numeric(20,2),round(size_with_requested_compression_setting_KB*1.0/size_with_current_compression_setting_KB,2)) ,size_with_current_compression_setting_KB -size_with_requested_compression_setting_KB AS DataCompression_Saving_KBFROM #index a inner join #datacompress b ON a.obj_name = b.object_name and a.indid = b.index_idWHERE convert(numeric(20,2),round(size_with_requested_compression_setting_KB*1.0/size_with_current_compression_setting_KB,2)) <= 1and size_with_current_compression_setting_KB > 0and convert(numeric(20,2),round(size_with_requested_compression_setting_KB*1.0/size_with_current_compression_setting_KB,2)) < 1ORDER BY size_with_current_compression_setting_KB -size_with_requested_compression_setting_KB DESC

Sample result:

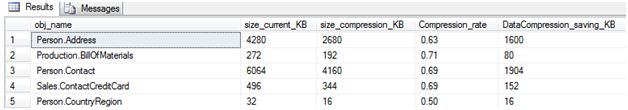

We can also rollup the summary on table level by below query (under the same query session).

SELECT sch_name+'.'+ obj_name as obj_name, sum(size_current_KB) AS size_current_KB, sum(size_compression_KB) AS size_compression_KB, convert(numeric(20,2),round(sum(size_compression_KB)*1.0/sum(size_current_KB)*1.0,2)) AS Compression_rate , sum(size_current_KB) - sum(size_compression_KB)AS DataCompression_saving_KBFROM (select a.sch_name, a.obj_name, a.index_name, size_with_current_compression_setting_KB AS size_current_KB, size_with_requested_compression_setting_KB AS size_compression_KB, Compression_rate = convert(numeric(20,2),round(size_with_requested_compression_setting_KB*1.0/size_with_current_compression_setting_KB,2)) ,size_with_current_compression_setting_KB -size_with_requested_compression_setting_KB AS DataCompression_Saving_KBFROM #index a inner join #datacompress b ON a.obj_name = b.object_name and a.indid = b.index_idWHERE convert(numeric(20,2),round(size_with_requested_compression_setting_KB*1.0/size_with_current_compression_setting_KB,2)) <= 1and size_with_current_compression_setting_KB > 0and convert(numeric(20,2),round(size_with_requested_compression_setting_KB*1.0/size_with_current_compression_setting_KB,2)) < 1)aGROUP BY sch_name, obj_name

Sample result:

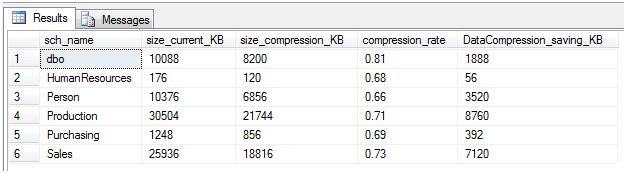

Some database is designed with heavy usage of schema, we can check out the space saving by aggregating to schema level. Below query does the trick (under the same query session).

SELECT sch_name, sum(size_current_KB) AS size_current_KB, sum(size_compression_KB) AS size_compression_KB, convert(numeric(20,2),round(sum(size_compression_KB)*1.0/sum(size_current_KB)*1.0,2)) AS compression_rate, sum(size_current_KB) - sum(size_compression_KB) AS DataCompression_saving_KBFROM (SELECT a.sch_name, a.obj_name, a.index_name, size_with_current_compression_setting_KB as size_current_KB, size_with_requested_compression_setting_KB as size_compression_KB, Compression_rate = convert(numeric(20,2),round(size_with_requested_compression_setting_KB*1.0/size_with_current_compression_setting_KB,2)) ,size_with_current_compression_setting_KB -size_with_requested_compression_setting_KB as DataCompression_Saving_KBFROM #index a inner join #datacompress b on a.obj_name = b.object_name and a.indid = b.index_idWHERE convert(numeric(20,2),round(size_with_requested_compression_setting_KB*1.0/size_with_current_compression_setting_KB,2)) <= 1and size_with_current_compression_setting_KB > 0and convert(numeric(20,2),round(size_with_requested_compression_setting_KB*1.0/size_with_current_compression_setting_KB,2)) < 1)agroup by sch_name

Sample result:

Implementing SQL Server Data Compression

Index compression

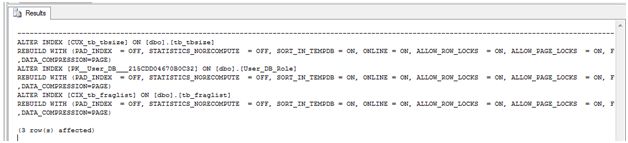

Until now, we are trying to see what we can benefit from implementing data compression. It’s time to get onto the step of generating script to implement data compression on table and index.

Execute below T-SQL statement in the same query session. Replace option PAGE with ROW if you opt for ROW compression (under the same query session).

DECLARE @SORT_IN_TEMPDB varchar(5) = 'ON'DECLARE @ONLINE varchar(5) = 'ON'DECLARE @Compression varchar(10) = 'PAGE'--/Row'--Add use database and goSELECT 'ALTER INDEX ['+index_name+'] ON [dbo].['+obj_name+'] REBUILD WITH (PAD_INDEX = OFF, STATISTICS_NORECOMPUTE = OFF, SORT_IN_TEMPDB = '+@SORT_IN_TEMPDB+', ONLINE = '+@ONLINE+', ALLOW_ROW_LOCKS = ON, ALLOW_PAGE_LOCKS = ON, FILLFACTOR = 90,DATA_COMPRESSION='+@Compression+')'FROM #index a inner join #datacompress b on a.obj_name = b.object_name and a.indid = b.index_idWHEREconvert(numeric(20,2),round(size_with_requested_compression_setting_KB*1.0/size_with_current_compression_setting_KB,2)) <= 1and a.index_name IS NOT NULLAND size_with_current_compression_setting_KB > 0 AND convert(numeric(20,2),round(size_with_requested_compression_setting_KB*1.0/size_with_current_compression_setting_KB,2)) < 1ORDER BY size_with_current_compression_setting_KB

By running the above query, it will produce data compression script for the index:

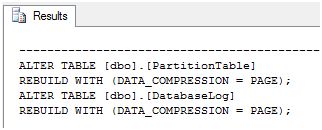

Table compression

For table compression, you can run below query to produce the SQL statement for compression on user table. Replace PAGE with ROW if choose to do ROW level compression (under the same query session).

DECLARE @SORT_IN_TEMPDB varchar(5) = 'ON'DECLARE @ONLINE varchar(5) = 'ON'DECLARE @Compression varchar(10) = 'PAGE'--/Row'SELECT 'ALTER TABLE [dbo].['+obj_name+'] REBUILD WITH (DATA_COMPRESSION = '+@Compression+');'FROM #index a inner join #datacompress b on a.obj_name = b.object_name and a.indid = b.index_idWHERE convert(numeric(20,2),round(size_with_requested_compression_setting_KB*1.0/size_with_current_compression_setting_KB,2)) <= 1AND a.index_name IS NULLAND size_with_current_compression_setting_KB > 0 AND convert(numeric(20,2),round(size_with_requested_compression_setting_KB*1.0/size_with_current_compression_setting_KB,2)) < 1ORDER BY size_with_current_compression_setting_KB

Sample T-SQL for Page Compression on user table:

Conclusion

With many companies looking for ways to consolidate hardware resources and reduce cost of operation, data compression is certainly a way to achieve the goal quickly and effectively. You would want to keep a benchmark and compare the performance before and after and properly test it before deploying onto production.