A poorly optimized query can become the bottleneck in an otherwise highly optimized process. In many cases, the source of the slow performance is improper table joining in the WHERE clause. With that in mind, this article looks at ways to optimize table joins for speedy data retrieval.

Novice query writers are

quite content once they are satisfied that their query is retrieving the

correct data. Beyond that, there comes the inevitable realization that it

takes an unacceptable length of time for the query to come back with those

results. In fact, a poorly optimized query can become the bottleneck in an

otherwise highly optimized process. In many cases, the source of the slow

performance is improper table joining in the WHERE clause. With that in mind,

let’s take a look at ways to optimize our table joins for speedy data

retrieval.

How MySQL Resolves Table Joins

We’ve already seen in the Clearing

a Path through the 3NF Join Jungle article that normalized databases can

require a lot of joins to get at the desired data, lots and lots of joins! As

you can imagine, the mechanism through which the database engine links the

tables can greatly affect retrieval speed. Let’s take a look at a simple three

table query and see how MySQL scans them.

select a.au_lname,

a.au_fname,

ta.royaltyper,

t.title,

t.royalty

from authors a,

titleauthor ta,

titles t

where a.au_id = ta.au_id

and ta.title_id = t.title_id;

The following quote from the

MySQL documentation explains the single-sweep/nested-loop join method that it

employs.

MySQL resolves all joins using a

[single sweep] nested-loop join method. This means that MySQL reads a row from

the first table, and then finds a matching row in the second table, the third

table, and so on. When all tables are processed, MySQL outputs the selected

columns and backtracks through the table list until a table is found for which

there are more matching rows. The next row is read from this table and the

process continues with the next table.

As MySQL scans each table row,

values from the preceding tables are used to find rows in the current table. In

a three table query like the one above, values from table A are used to find

rows in table B, then values from table B are used to find values in table

C. Applying that to our query, MySQL would read the authors table, then the

titleauthor, then the titles. Once one full sweep is finished (found rows in table

A, table B, table C), MySQL does not go back to table A, but to table B to see

if there are any more rows that match the current value from table A. If there

are, it gets that row and then finds matching rows in table C again.

A

join can quickly become unwieldy when dealing with as few as three tables, as evidenced by the following numbers reported by Mike Papageorge

in his www.fiftyfoureleven.com blog.

SELECT tableA.*,

locations.location

FROM tableA

Left Join tableA2locations on

tableA2locations.tableA_id = tableA.id

Left Join locations on

tableA2locations.location_id = locations.id

WHERE locations.location = 'sometown’;

Say that tableA in the above statement contains 40,000 rows that must

be scanned before sweeping through the other tables. If there are 2000 rows retrieved

from tableA2locations, that amounts to 800,000 rows of data. Adding

additional tables in the join could produce result sets in the millions!

Having said that, keep in mind that this estimate leans more towards the

worst case end of the scale, since other WHERE conditions will almost always

dramatically reduce the real number of rows the query produces.

Now that we’ve established

what can happen with haphazardly organized table joins, let’s review some strategies

for improving query execution speed.

Select from the most limiting table first

The effect of selecting from

a table whose result set is limited due to WHERE constraint is to minimize the

number of rows that the database engine has to iterate through. This Works

because the first table is the one which usually requires the most scanning.

Ironically, most queries are written to select from the main table first and then

join the lookups to it. We now know that this may not be the most efficient.

Use STRAIGHT_JOIN and

LEFT JOIN to Specify Table Order

The table read

order forced by STRAIGHT_JOIN or LEFT JOIN helps the join

optimizer do its work much more quickly, because there are fewer table

permutations to check. In case you’re unfamiliar with STRAIGHT_JOIN, it’s similar to JOIN, except that the left table is always read before the right

table, making it perfect for those (few) cases for which the join optimizer

puts the tables in the wrong order.

For other join

types, the MySQL’s join optimizer calculates the order in which tables should

be joined. Note that this means that if you do a query like the following one,

MySQL does a full scan on b because the LEFT JOIN forces it to be

read before d:

SELECT *

FROM a JOIN b LEFT JOIN c ON (c.key=a.key)

LEFT JOIN d ON (d.key=a.key)

WHERE b.key=d.key;

The fix in this case is reversing

the order in which a and b are listed in the FROM clause.

SELECT *

FROM b JOIN a LEFT JOIN c ON (c.key=a.key)

LEFT JOIN d ON (d.key=a.key)

WHERE b.key=d.key;

Shortcut Joins

A shortcut join is a join that

provides an alternative path between two tables. Shortcut

joins improve the performance of a query by not

taking into account intermediate tables, thus shortening a normally longer join

path.

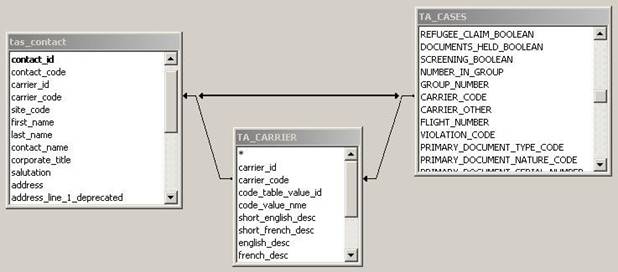

In the following example the

column carrier_code column appears in both the tables tas_contact

and TA_CASES. The carrier_code points to the same data for both

tables. The normal path for a query using carrier_code from tas_contact

and TA_CASES, is to pass through the intermediary table TA_CARRIER.

The shortcut

join directly linking tas_contact and TA_CASES allows the query

to ignore the intermediary table TA_CARRIER, optimizing the query.

The EXPLAIN Analysis Tool

You can get a good indication of how good a join is by taking the product of

the values in the rows column of the EXPLAIN output. This should tell you

roughly how many rows MySQL must examine to execute the query.

explain

select a.au_lname,

a.au_fname,

ta.royaltyper,

t.title,

t.royalty

from authors a,

titleauthor ta,

titles t

where a.au_id = ta.au_id

and ta.title_id = t.title_id

|

id |

select_type |

table |

type |

possible_keys |

key |

key_len |

ref |

rows |

Extra |

|

1 |

SIMPLE |

ta |

ALL |

(NULL) |

(NULL) |

(NULL) |

(NULL) |

800 |

|

|

1 |

SIMPLE |

t |

ref |

NewIndex |

NewIndex |

23 |

crosstab_article.ta.title_id |

100 |

Using |

|

1 |

SIMPLE |

a |

ALL |

(NULL) |

(NULL) |

(NULL) |

(NULL) |

1000 |

Using |

The fewer rows appear there,

the faster the query should run.

Optimizing IN/ANY Subqueries

A very useful

optimization is to “inform” the subquery that the only rows of interest are

those where the inner expression inner_expr is equal to outer_expr.

This is done by pushing down an appropriate equality into the subquery’s WHERE

clause. That is, the comparison is converted to this:

EXISTS (SELECT 1 FROM ... WHERE subquery_where AND outer_expr=inner_expr)

After the conversion, MySQL

can use the pushed-down equality to limit the number of rows that it must

examine when evaluating the subquery.

Conclusion

Although Join optimization

should never be used as a substitute for well-thought out column indexing, it

can greatly improve the performance of your queries in many instances. In a

future article, we’ll look more closely at EXPLAIN as well as other analytical tools

to help minimize the number of rows returned by each step of a query. In doing

so, it is possible to lower result set size from tens of millions of rows to

several hundred! The effect of which is impressive gains in query execution

time.