by Robert D. Schneider

Introduction

The infrastructure that bolsters today’s IT environments must contend with more complexity than ever before, such as mixed workloads and virtualization, along with the unique benefits and demands presented by parallelized hardware.

As usual, the burden of delivering a well-performing, scalable data processing environment falls on IT administrators and architects. In this article, I explain how Sybase Adaptive Server Enterprise (ASE) has been augmented with a series of capabilities designed to deliver better performance and scalability through its hybrid-threaded kernel. I begin by briefly enumerating some of the root causes of the performance and scalability challenges encountered by modern applications. I also point out that since they form the underpinnings of many aspects of an enterprise’s IT infrastructure, relational databases bear the brunt of these challenges. Finally, I describe the workings of ASE’s hybrid-threaded kernel, along with several real-world performance proof points.

This article is meant for IT leadership, database architects, system administrators, and anyone else responsible for providing scalable, responsive infrastructure.

Modern Performance and Scalability Demands

Designing, implementing, and supporting a well-running data center has never been easy. However, this job has become even more complicated in the past several years as a collection of disparate, but inter-related occurrences impact the IT organization. Each of the trends that I list in this section has gained momentum largely in response to business requirements such as cost control, streamlined administration, and globalized, 24 x 7 operations. Let’s look at some of the most prominent factors:

· Parallel hardware. The physics behind ever-increasing processor clock speeds have reached their limits, resulting in a desperate need to discover new ways to drive improved performance. In response, parallel processing is at the heart of today’s processor and system architectures. Servers now routinely incorporate many key prerequisites for parallel processing, such as additional processors, multiple cores on a single socket, and extra hardware threads per core.

· Virtualization. In an effort to squeeze more work from underutilized servers, IT has turned to virtualization. Along with the upsides of virtualization are also some significant challenges. In particular, there is far less control and predictability over the underlying physical server’s state at any one time.

· Mixed workloads. On top of the changes I just listed, hardware platforms are also increasingly asked to concurrently perform traditional transactional processing along with business intelligence-style chores. Moreover, the exact blend of these responsibilities is extremely variable, which further impedes planning.

In response to these and further improving the resource efficiency and TCO benefits of ASE, Sybase architects created a new kernel architecture model that provides administrators with more options to deliver a scalable, high-performance environment.

Sybase ASE’s Hybrid-threaded Kernel

Before I describe the capabilities and benefits of the new threaded kernel, let’s quickly review some fundamental kernel concepts:

1. Each connection to the database is called a task.

2. Each task is given a server process ID (SPID) or kernel process ID (KPID).

3. Each SPID has one KPID that identifies the memory location for the process.

4. Tasks are internal to ASE; the operating system doesn’t see them.

5. Tasks run on engines, which are analogous to CPUs.

6. A scheduler within ASE selects the task to be run by the engine.

7. Engines communicate with each other via shared memory.

Threaded Kernel Architecture

ASE 15.7 introduces a new threaded kernel that builds on the best aspects of Sybase’s well-proven architecture. In the threaded kernel, each engine is a thread of a single process, rather than requiring its own, separate operating system process. This means that database engines are freed from the responsibility of performing I/O, network, and other potentially time-consuming activities. Instead, engines can focus on running compute-intensive user tasks. As I’ll soon describe, there are additional specialized threads for handling I/O, replication, and (CIS) chores. This division of labor delivers noticeably better performance and throughput.

The new kernel is organized around a collection of thread pools. All threads live within a thread pool, and can only see other threads in the same pool. All work is done by tasks that are assigned to a thread pool, and only threads in the same pool may schedule tasks. There are three system-supplied thread pools:

- Default pool. Engines reside here, along with all tasks related to SPID and KPID. Administrators size this pool to enable multiple engines.

- System pool. This pool contains I/O threads, the signal thread, and several low-level CPU threads. Administrators don’t have significant control over the size of this pool other than threads for servicing disk and network I/O.

- Blocking pool. This pool contains threads that make long-latency calls on behalf of database tasks. If blocking needs to occur, it can take place in these threads instead of the blocking the engines themselves. This is only used internally with the threaded kernel and does not require DBA attention.

ASE’s threaded kernel is designed for compatibility: you can easily adopt it without any changes to applications, and with minimal changes to configuration settings. For example, the SAP Business Suite development team implemented the new kernel during the middle of their software development process. No changes in application layer were necessary to employ the new kernel.

Threaded Kernel Performance Benchmarks

Administrators are free to choose the threaded kernel in response to site-specific demands. The threaded kernel is ideal for:

- Mixed CPU and I/O bound workloads

- Bursts of connections

- Replication Agent and CIS (proxy table) users

- Write-intensive workloads

While each enterprise sports a unique information-processing environment, let’s examine three distinct benchmarks that demonstrate the performance advances presented by the threaded kernel.

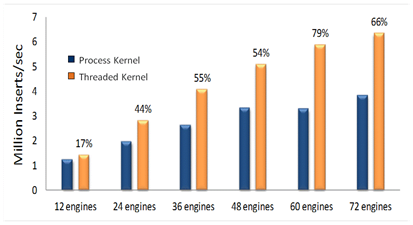

In the write-intensive first example – shown in figure 1 – the threaded kernel is able to deliver consistently better scalability, even as the number of engines increases.

Figure 1: Inserting massive numbers of records

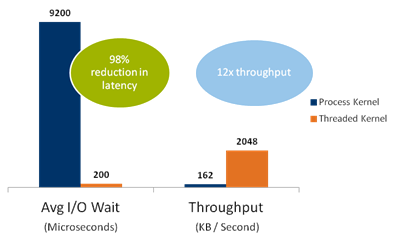

The threaded kernel is notably superior in the second example, which examines responsiveness and throughput for the Replication Agent. As is demonstrated here, Replication Agent’s are limited to the engines on which they can run because of stateful Open Client connection required to Replication Server. The new threaded kernel removes this restriction allowing significantly more opportunity to push more data through the Rep Agent at significantly low latencies.

Figure 2: Replication Agent performance

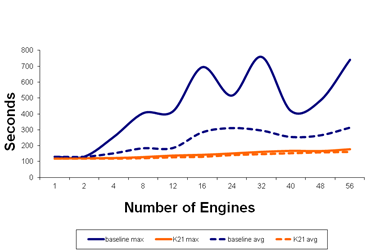

Finally, the third benchmark shows how consistently the threaded kernel (represented by the orange line) performs when faced with highly variable workloads.

Figure 3: Consistent results with the threaded kernel

Conclusion

Driven by unstoppable business and technological realities, virtualization, mixed workloads, and parallel hardware will continue to be a fact of life for IT. This means that core infrastructure must be prepared to cope with these demands.

The threaded kernel meets its goal of more consistent and predictable performance via:

- Streamlined I/O handling

- Reduction in “wasted” CPU cycles and improved efficiency

- Improved load balancing for CIS and Replication Agent work

- Less interference between CPU and I/O bound work

Sybase ASE’s threaded-kernel architecture gives administrators the option of selecting the right approach for their individual needs without needing to make any software or configuration changes to realize the benefits of this approach.

About the Author

Robert D. Schneider is a Silicon Valley-based technology consultant and author. He has provided database optimization, distributed computing, and other technical expertise to a wide variety of enterprises in the financial, technology, and government sectors.

He has written 6 books and numerous articles on database technology and other complex topics such as cloud computing, Big Data, data analytics, and Service Oriented Architecture (SOA). He is a frequent organizer and presenter at technology industry events, worldwide. Robert blogs at rdschneider.com.