Oracle has given us a new parameter STATISTICS_LEVEL for

collecting database statistics. Lets take a deeper look at what you can expect

from your database system when this parameter is set and statistics are being

gathered.

It’s Required

No, turning on statistics is not required. Although, ever

since I have been working with Oracle databases, I have on only one occasion

remembered when Oracle has not recommended turning on at least timed_statistics.

If you recall it was back in the version 8.1.6 days when there was a problem

and everyone scrambled to get on the “stable” 8.1.7 version. Today, the only

parameter that I have found, related to statistical collections, that Oracle

does not recommend keeping continually turned on is the timed_os_statistics.

The Legend

There is a lot of stigmatism and folklore going around about

turning on statistical collections. When doing a quick look through

documentation, websites, newsgroups, and asking my local Oracle professionals,

I came up with the following responses to statistical collection.

- You must set

TIMED_STATISTICS to TRUE in the parameter file.

- I recommend

using timed statistics.

- Doing this

will have a small negative effect on system performance.

- You will incur

minimal resource overhead

- You cannot

afford to be without the information that timed statistics provides.

I don’t know about you but these tend to leave me very

uneasy and stressed when reading these comments. They range of feelings I have

are from ‘YOU MUST’ to ‘YOU SHOULD NOT’. Personally, I tend to get very uneasy

when I am told what to do and what not to do, especially when there aren’t any

benchmarks to support the statements.

Typical Responses

Not only does Oracle themselves not have any benchmarks on

what system overhead is incurred when collecting statistics, at least that they

want to share, but I have been unable to find any in the Oracle community.

Here are the typical responses I have gotten when asked about benchmarks.

- Sorry, Oracle

Support does not have any specific benchmark information.

- Contact

___________, they may have benchmark information.

- Can anyone

else share their input and experiences.

- My Technical

Consultant has not seen any specific benchmarks on this.

- Take a look at

the guide and if necessary we will try to get some development resources

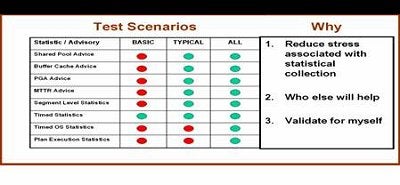

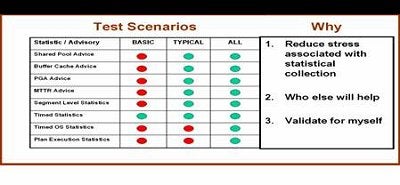

Test Scenarios

In order to reduce the stress I have generated and validate

for myself the system impact of statistical collection, I set out to run three

simple tests and compare my results. As you recall, the parameter

STATISTICS_LEVEL has three settings: BASIC, TYPICAL, and ALL. What I wanted to

do was set my STATISTICS_LEVEL to each of these three settings and run the same

workload through. As you might also remember, setting STATISTICS_LEVEL to BASIC

does no collection so I had to hardwire timed_statistics equal to TRUE in my

SPFILE. In figure 1, you can see what statistics and advisories where turned on

during each of the three test scenarios. My system simulated an OLTP

environment. In addition, I did not want contention to play a big impact so I

reduced the transactions to a point where I knew that they would have plenty of

resources available to them. This was done so that I could safely say that a

reduction of workload and throughput was not caused by contention, but in fact,

was caused by the statistic levels I was testing for.

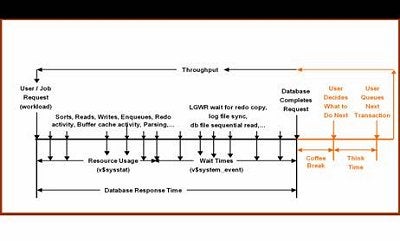

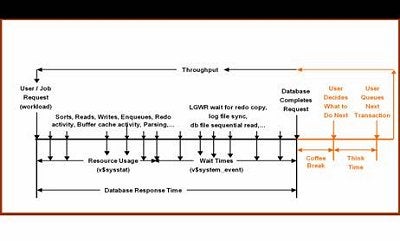

A Little Background

Let’s get a quick level set on what it is I really was

concerned with monitoring and comparing, with the three test scenarios. In

figure 2, you will see a typical, yet basic database system. At the front of

the time slice, users or jobs request work from the database system. This is

called the workload. As the workload goes through the system it incurs database

resources (v$sysstat) and wait times (v$system_event). The accumulation of

resource usage and wait times in the database correlates to database response

time. Other factors that contribute to the total throughput of user requests are

basically just “other” things that humans do during a normal workday. These

range from getting coffee to figuring out what the next transaction looks like.