About the Series …

This is the sixteenth

article of the series, Introduction to MSSQL Server 2000 Analysis

Services. As I stated in the first article, Creating Our First Cube, the primary focus of this series is an introduction

to the practical creation and manipulation of multidimensional OLAP cubes. The

series is designed to provide hands-on application of the fundamentals of MS

SQL Server 2000 Analysis Services ("MSAS"), with each

installment progressively adding features and techniques designed to meet

specific real-world needs. For more information on the series, as well as the hardware

/ software requirements to prepare for the exercises we will undertake,

please see my initial article, Creating Our

First Cube.

Note: Service Pack 3 updates are assumed for MSSQL

Server 2000, MSSQL Server 2000 Analysis Services, and the related Books

Online and Samples.

Introduction

We

learned in our last lesson, MSAS

Administration and Optimization: Simple Cube Usage Analysis,

that Microsoft SQL Server 2000 Analysis Services ("MSAS") provides

the Usage Analysis Wizard to assist us in the maintenance and

optimization of our cubes. We noted that the Usage Analysis Wizard

allows us to rapidly produce simple, on-screen reports that provide information

surrounding a cube’s query patterns, and that the cube activity metrics

generated by the wizard have a host of other potential uses, as well, such as

the provision of a "quick and dirty" means of trending cube

processing performance over time after the cube has entered a production status.

As I

stated in Lesson 15,

however, I often receive requests from clients and readers, asking how they can approach the

creation of more sophisticated reporting to assist in their usage analysis

pursuits. This is sometimes based upon a need to create a report similar to

the pre-defined, on-screen reports, but in a way that allows for printing,

publishing to the web, or otherwise delivering report results to information

consumers. Moreover, some users simply want to be able to design different

reports that they can tailor themselves, to meet specific needs not addressed

by the Usage Analysis Wizard’s relatively simple offerings. Yet others

want a combination of these capabilities, and / or simply do not like the

rather basic user interface that the wizard presents, as it is relatively

awkward, does not scale and so forth.

Each of these more

sophisticated analysis and reporting needs can be met in numerous ways. In this lesson, we will we will

examine the source of cube performance statistics, the Query Log,

discussing its location and physical structure, how it is populated, and other

characteristics. Next, we will discuss ways that we can customize the degree and

magnitude of statistical capture in the Query Log to enhance its value

with regard to meeting more precisely our local analysis and reporting needs; we

will practice the process of making the necessary changes in settings to

illustrate how this is done. Finally, we will discuss options for generating

more in-depth, custom reports than the wizard provides, exposing ways that we

can directly obtain detailed information surrounding cube processing

events in a manner that allows more sophisticated selection, filtering and

display, as well as more customized reporting of these important metrics.

At the Heart of Usage Analysis for the Analysis Services

Cube: The Query Log

Along with an

installation of MSSQL Server 2000 Analysis Services comes the installation of

two independent MS Access databases, msmdqlog.mdb, and msmdrep.mdb.

By default, the location in which these databases are installed is [Installation Drive]:\Program Files\Microsoft

Analysis Services\Bin.

The msmdrep.mdb database houses the repository, and will not be the

focus of this lesson. We will be concentrating upon the msmdqlog.mdb database,

the home of the Query Log where the source information for our usage

analysis and reporting is stored.

Structure

and Operation of the Query Log

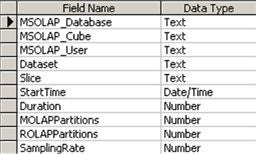

A study

of msmdqlog.mdb reveals that it consists of a single table, named, aptly

enough, QueryLog. Illustration 1 depicts the table within the

database, design view, so that we can see it’s layout for purposes of

discussion.

Illustration 1: The

Query Log Table, Design View, within Msmdqlog.mdb

NOTE: Making a copy of msmdqlog.mdb

before undertaking the steps of this lesson, including entering the database

simply to view it, is highly recommended to avoid issues with an operating MSAS

environment, damaging a production log, etc.

The Usage-Based

Optimization Wizard and Usage Analysis Wizard (see Lesson 15 for a discussion) rely on the Query Log,

as we learned in our last session. As we can see, the log is composed of

several relatively straightforward fields. The fields, together with their

respective descriptions, are summarized in Table 1.

|

Field |

Description |

|

MSOLAP_Database |

The |

|

MSOLAP_Cube |

The |

|

MSOLAP_User |

The name |

|

Dataset |

A |

|

Slice |

A |

|

StartTime |

The |

|

Duration |

The |

|

MOLAPPartitions |

The |

|

ROLAPPartitions |

The |

|

SamplingRate |

The |

Table 1: The Fields of

the Query Log

In lockstep with a

review of the fields from a description perspective, we can view sample data in

Illustration 2, which depicts the same table in data view.

Illustration 2: The

Query Log Table, Data View, within Msmdqlog.mdb

Although

each of the fields has a great deal of potential, with regard to analysis and

reporting utility (the first three could be seen as dimensions), the fourth, Dataset,

can be highly useful with regard to the information that it reveals about cube

usage. The cryptic records within this column represent the associated

levels accessed for each dimensional hierarchy within the query. An

example of the Dataset field ("121411") appears in the

sample row depicted in Illustration 3.

![]()

Illustration 3: Example

of the Dataset Field

While we

won’t go into a detailed explanation in this lesson, I expect to publish an

article in the near future that outlines the interpretation of the digits in

the Dataset field. We will trace an example Dataset field’s

component digits to their corresponding components in the respective cube

structure, along with more information regarding report writing based upon the Query

Log in general. Our purpose here is more to expose general options for

using the Query Log directly to generate customized usage analysis

reports.

Additional

fields provide rather obvious utility in analyzing cube usage, together with

performance in general. The Slice field presents information, in

combination with Dataset, which helps us to report precisely on the

exact points at which queries interact with the cube. These combinations can

provide excellent access and "audit" data. To some extent, they can

confirm the validity of cube design if, say, a developer wants to verify which

requests, collected during the business requirements phase of cube design, are

actually valid, and which, by contrast, might be considered for removal from

the cube structure based upon disuse, should the time arrive that we wish to

optimize cube size and performance by jettisoning little-used data.

StartTime and Duration provide the ingredients for evolved

performance trending, and act as useful statistics upon which to base numerous

types of administrative reports, including information that will help us to

plan for heavy reporting demands and other cyclical considerations. MOLAPPartitions

and ROLAPPartitions,

which provide counts on the different multidimensional OLAP or relational OLAP partitions,

respectively, that were used to retrieve the specified query results, can also

offer great advanced reporting options, particularly in the analysis /

monitoring of partitioning scheme efficiency, and the related planning for

adjustments and so forth.

Finally, SamplingRate displays the setting in effect for

automatic logging of queries performed by MSAS. This appears in Illustration

2 at its default of 10. The setting can be changed, however, as we shall

see in the next section.