Introduction: Transparent Data Encryption

SQL Server’s EncryptBy* and DecryptBy* address encryption of

a subset of data in a database. This was introduced in SQL Server 2005. The big

news in SQL Server 2008 is Transparent Data Encryption.

On the surface, Transparent Data Encryption seems to be a security “silver

bullet”. The most important feature (besides securing the data) is that it does

not require any changes to your application code. Data is encrypted “at

rest” which means that “data

is encrypted before it is written to disk; data is decrypted when it is

read from disk.”

As is true of any security-related feature, Transparent Data

Encryption is meant to be just a part of a defense-in-depth strategy.

Caveats

Transparent Data Encryption has an above-average list of

caveats.

-

TDE is a SQL Server 2008 Enterprise-only feature. (Of course, it

works on Developer-Edition also.) -

Microsoft says the performance hit is “largely dictated by the

application workload, in some of the testing we conducted, the overhead was

measured to be less than 5%.” (ref) -

When you turn on TDE for one database in an instance, tempdb is

encrypted for all databases on that instance. Microsoft says, “This might have

a performance effect for unencrypted databases on the same instance of SQL

Server.” (ref) -

“Encrypted data compresses significantly less than equivalent

unencrypted data. If TDE is used to encrypt a database, backup compression will

not be able to significantly compress the backup storage. Therefore, using TDE

and backup compression together is not recommended.” (ref) -

“While a re-encryption scan for a database encryption operation

is in progress, maintenance operations to the database are disabled.” (ref)

Performance Testing

The focus here is to test the performance of SQL Server

2008’s Transparent Data Encryption. How does it perform compared to a database

without encryption? (For information on how EncryptByKey/DecryptByKey affects

performance, see SqlCredit

Part 8: Comparing Encrypt/DecryptByCert and Encrypt/DecryptByKey.) How does

the mix of SELECT/INSERT/UPDATE affect the performance results? We’ll see how

these results compare to Microsoft’s published expectations and also include

the full code so you can rerun the tests in your environment.

If you have read some of my recent performance test

articles, much of the details below will be familiar.

Test System Details

For this test, I used this system:

4 64-bit Dual-Core AMD Processors

16 GB RAM

Direct-attached SCSI drives

Windows Server 2003 R2 Enterprise

x64 Edition

SQL Server 2008 (RTM)

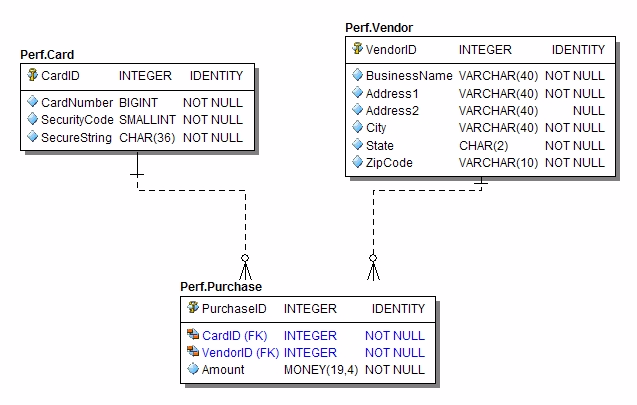

Test Schema

Each table has a clustered primary key on the ID column.

Non-Clustered Indexes:

- Vendor: non-unique index on BusinessName

- Card: unique index on CardNumber, SecurityCode

- Card: non-unique index on SecurityCode, CardNumber

- Card: non-unique index on SecureString

- Purchase: non-unique index on CardID

- Purchase: non-unique index on VendorID

Test Details

The test scripts are built to mimic an OLTP workload. There

are three tables involved: Vendor, Card, and Purchase.

The “Executive Summary”

The tests use multiple simultaneous calls to a SQL script that

calls stored procedures in a loop to first load and then read, insert, or

update data.

The Gory Details

The “driver” for this test is a simple framework I built

years ago called Hummer. It uses a .bat file to run some initial setup scripts

then starts n simultaneous processes, each running the same script. The

idea is to simulate multiple clients fighting for database resources. Each

script includes an occasional call to DELAY. This helps to allow the multiple

processes to share the database resources. It also better simulates a real OLTP

workload.

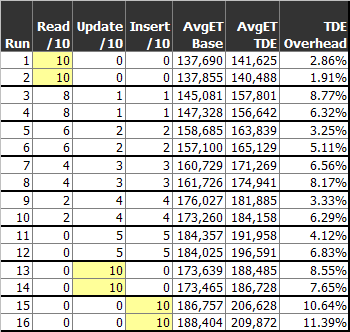

There were multiple tests with different parameters. You can

see all the details in the chart below. Let’s look at Test Run 7 as an

example.

The .bat script performs these steps:

- Drop and recreate the database.

- Create the tables and indexes.

- Create the stored procedures.

- Start 10 processes, each running the main test script.

The CREATE DATABASE script creates the Data file with an

initial size of 100 MB and the Log with an initial size of 20 MB, each set to grow

10%.

The main test script performs these steps:

- Execute DBCC FREESYSTEMCACHE.

- Execute DBCC DROPCLEANBUFFERS.

- Delay 5 seconds on start-up (to allow all processes to start).

- Loop 100,000 times

-

Read control parameters (SP call). If finished with test, stop.

(This also allows you to change the parameters or stop the test while it’s

running.) - Every 1000 loops, delay 1 second.

- For the first 10,000 loops, create a Vendor record (SP call).

- For the first 10,000 loops, create a Card record (SP call).

- For the first 10,000 loops, create a Purchase record (SP call).

- Of every 10 loops:

- 4 of 10 times: read a Card record.

- 3 of 10 times: update a Vendor record.

- 3 of 10 times: insert a Purchase record.

- End loop

(After the first 10,000 loops, all

read and update activity is on some random record in the set.)

This process is run for both the Base (unencrypted) and TDE

databases.

It is important to understand that we’re inserting 10,000

Vendor, Card, and Purchase records per process. So, the total number of

records inserted (in the initial set) is 100,000 records per table.

The elapsed time is for the looping work only, not the

database creation or any of the other setup work.

Results

Parameters consistent in all tests:

- LoopMax: 100,000

- Process Count: 10

- Records Created: 10,000 per process per table

- “AvgET” times are in milliseconds

The average over all sixteen tests was 6.36%. There

is clearly some variation among the tests, but I believe this gives a good

indication of the overhead of using TDE for a transactional workload.

Remember that this is running the exact same workload on the

exact same hardware.

CRUD Patterns?

I don’t see a strong pattern showing that, for instance,

reads are cheaper than writes. There is certainly an indication that

reads are cheaper than writes, but it’s not a consistent pattern.

CPU

How does TDE affect CPU usage? This is a tough one to answer

definitively. What seems appropriate is to show representative CPU graphs from

the various runs. You can draw your own conclusions from the graphs. My

opinion? There is no clear difference. TDE does not add a noticeable amount of

CPU overhead.

Note that, for consistency, I captured these images at the

same point in each test (right after the 70,000th loop completed).

Full-Read Test (10/0/0) – Base

Full-Read Test (10/0/0) – TDE

Full-Write Test (0/5/5) – Base

Full-Write Test (0/5/5) – TDE

Conclusion

The average elapsed-time overhead was 6.36%. This

confirms that “in some of the testing …, the overhead was measured to be less

than 5%.” Given the benefit of having “at rest” data encrypted, this seems like

a very reasonable cost.

Having run a number of similar tests on new features in SQL

Server, I am amazed that TDE has such a minimal effect on the time to run a

workload, and it has no obvious affect on CPU utilization. In my mind, this is

a clear win. TDE adds very little cost to your processing, it costs nothing

(assuming you’ve already paid for Enterprise Edition), and you don’t have to

change your application at all. Bravo to the development team for

delivering such a strong new feature.

Thoughts? Drop a note in the forum or

leave a comment.

All of the code is included, so feel free to modify it and

rerun the tests to suit your specific needs. If you do rerun the tests, please

share the results.

Reference

-

Understanding

Transparent Data Encryption (TDE) from Books Online -

Database

Encryption in SQL Server 2008 Enterprise Edition from MSDN -

SQL

Server 2008: Transparent data encryption feature – a quick overview from Laurentiu

Cristofor’s blog -

SQL

Server 2008 – Transparent Data Encryption from Andrew Fryer’s blog -

SQL

Server 2008 Transparent Data Encryption (TDE) from Jose Barreto’s Blog -

SQL

Server 2008 Transparent Data Encryption from Brad M McGehee’s blog

Download the files for this article.