If you haven’t read the first CDC article, consider reading that now before reading this one. It will help lay the groundwork for what is covered here.

Introduction: CDC Performance Tests

The purpose here is to test the performance of SQL Server 2008’s Change Data Capture functionality. How does it perform compared to a system with no change-tracking functionality?

If you have read some of my recent performance test articles, much of the details below will be familiar. This test used a different system and SQL Server 2008 only, so read the “Test System Details” section, and then skip down to the results. The test framework also is updated to use a “Common” database that stores the run parameters and test results.

It is important to note here that the tests are performing no SELECTs. This is because what we care about here is tracking the cost of change data capture.

Test System Details

For this test, I used this setup:

4 64-bit Dual-Core AMD Processors

64 GB RAM

Direct-attached SCSI drives

Windows Server 2003 R2 Enterprise x64 Edition

SQL Server 2008 (RTM)

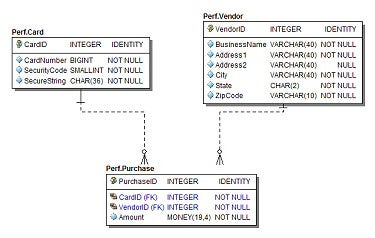

Test Schema

Each table has a clustered primary key on the ID column.

Non-Clustered Indexes:

- Vendor: non-unique index on BusinessName

- Card: unique index on CardNumber, SecurityCode

- Card: non-unique index on SecurityCode, CardNumber

- Card: non-unique index on SecureString

- Purchase: non-unique index on CardID

- Purchase: non-unique index on VendorID

Test Details

The test scripts are built to mimic an OLTP workload. There are three tables involved: Vendor, Card, and Purchase.

The “Executive Summary”

The tests use multiple simultaneous calls to a SQL script that calls stored procedures in a loop to first load and then insert and/or update data.

The Gory Details

The “driver” for this test is a simple framework I built years ago called Hummer. It uses a .bat file to run some initial setup scripts then starts n simultaneous processes, each running the same script. The idea is to simulate multiple clients fighting for database resources. Each script includes an occasional call to DELAY. This helps to allow the multiple processes to share the database resources. It also better simulates a real OLTP workload.

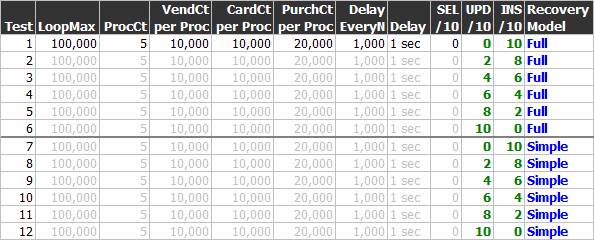

There were multiple tests with different parameters. You can see all the details in the chart below. Let’s look at Test Run 2 as an example.

The .bat script performs these steps:

- Drop and recreate the database.

- Create the tables and indexes.

- Create the stored procedures.

- Start 5 processes, each running the main test script.

The CREATE DATABASE script creates the Data file with an initial size of 400 MB and the Log with an initial size of 20 MB, each set to grow 10%. After Test Run 2, here are the Data and Log sizes:

- Basic Data: 400

- Basic Log: 20

- CDC Data: 400

- CDC Log: 624

The main test script performs these steps:

- Execute DBCC FREESYSTEMCACHE (‘ALL’).

- Execute DBCC DROPCLEANBUFFERS.

- Delay 5 seconds on start-up (to allow all processes to start).

- Loop 100,000 times

- Read control parameters (SP call). If finished with test, stop. (This also allows you to change the parameters or stop the test while it’s running.)

- Every 1000 loops, delay 1 second.

- For the first 10,000 loops, create a Vendor record (SP call).

- For the first 10,000 loops, create a Card record (SP call).

- For the first 20,000 loops, create a Purchase record (SP call).

- Of every 10 loops:

- 0 of 10 times: read a Card record.

- 2 of 10 times: update a Vendor record (see the “UPD/10” column).

- 8 of 10 times: insert a Purchase record (see the “INS/10” column).

(After the first 20,000 loops, all insert or update activity is on some random record in the set.)

- End loop

It is important to understand that we’re inserting 10,000 Vendor records, 10,000 Card records, and 20,000 Purchase records per process. So, the total number of records inserted (in the initial set) is 50,000, 50,000, and 100,000.

The elapsed time is for the looping work only, not the database creation or any of the other setup work.

Test Parameters

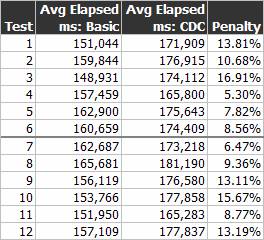

Results

The attached results files include sample output files. Remember that this is running the exact same workload on the exact same hardware.

Conclusion

The expected pattern is that using CDC is costly. That is not surprising at all when you understand that it is recording the CDC changes in the same database as the base changes. The average penalty for CDC with full recovery is 10.51%. The average penalty for CDC with simple recovery is 11.10%.

I expected to see a pattern showing that UPDATEs were more expensive. This did not turn out to be true in these tests.

As a side note, it is very interesting to see the performance results comparing full recovery to simple recovery. I expected to see a fairly significant difference in elapsed times when the databases were switched to simple recovery, but that did not turn out to be true. The average time actually went up for the simple mode tests (by 0.69 to 1.27%).

It is clear from the results that there is some variability in the numbers. It would be nice to see a perfect increase or decrease, but there are just too many variables involved regardless of how strictly you control the test environment. What you have to look at is patterns, and it’s clear here that adding CDC to this workload caused an increase of roughly 10%.

All of the code is included, so feel free to modify it and rerun the tests to suit your specific needs.

Thoughts or comments? Drop a note in the forum.

Reference

- SQL Server 2008’s Change Data Capture – Tracking the Moving Parts from Database Journal

- Microsoft SQL Server 2008 – Change Data Capture – Part I from Database Journal

- Microsoft SQL Server 2008 – Change Data Capture – Part 2 from Database Journal

- Microsoft SQL Server 2008 – Change Data Capture – Part 3 from Database Journal

- Microsoft SQL Server 2008 – Change Data Capture – Part 4 from Database Journal

- Overview of Change Data Capture from SQL Server Books Online

- Change Data Capture from SQL Server Books Online

- Tuning the Performance of Change Data Capture in SQL Server 2008 from Microsoft TechNet

Download the files for this article.