In my article

last month, I started a new series to discuss T-SQL best practices. This

is the second article in that series. This series of best practices will help

you write T-SQL code that performs better, and promote coding practices to help

minimize the number of application failures. This month I’m going to focus on

how to optimize writing a jack-of- all-trades stored procedure (SP). I’m

going to discuss what kind of SP this is and how you can optimize this kind of

SP by following a specific coding style.

Jack-Of-All-Trades Stored Procedure

Before I get into how to optimize a jack-of-all-trades SP, let’s

expand a little bit on what kind of stored procedure I’m talking about. The

jack-of-all-trades SP is a procedure that accepts a number of different

parameters. This procedure accepts none, one, many, or all of the parameters

associated with the jack-of-all-trades SP. Based the parameters passed, the

jack-of-all-trade SP determines what record set is to be brought back. Here is

a typical example of a jack-of-all-trades SP:

CREATE PROCEDURE JackOfAllTrades (@SalesOrderID int = NULL

,@SalesOrderDetailID int = NULL

,@CarrierTrackingNumber nvarchar(25) = NULL)

AS

SELECT * FROM AdventureWorks.Sales.SalesOrderDetail

WHERE

(SalesOrderID = @SalesOrderID or @SalesOrderID IS NULL)

AND (SalesOrderDetailID = @SalesOrderDetailID or

@SalesOrderDetailID IS NULL)

AND (CarrierTrackingNumber = @CarrierTrackingNumber or

@CarrierTrackingNumber IS NULL)

GO

Here my JackOfAllTrades SP accepts three different

parameters. All these parameters default to the NULL value. If a value is

passed in then it is used as a parameter in the WHERE clause to constrain

records returned. Each parameter in the SP is used to build a complex WHERE

clause that contains the following logic in the WHERE clause for each parameter

passed:

(<TableColumn> = @PARM or @PARM IS NULL)

This logic says if @PARM is passed a non-null value then

constrain the records returned to make sure that the <TableColumn> is

equal to the value of @PARM. The second part of that condition is “@PARM IS

NULL”. This part says if @PARM was not passed (equal to NULL) then don’t

constrain your data based on this parameter.

Let’s look at a typical execution of my JackOfAllTrades SP.

Suppose I execute my SP with the following command:

EXEC JackOfAllTrades @SalesOrderID = 43659

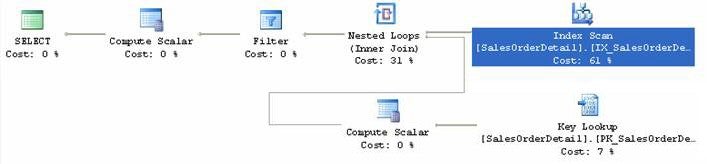

When this command executes, its execution plan looks like

this:

Here you can see that for the single parameter passed, the

optimizer decided it should use an “Index Scan” operation. My SP’s SELECT

statement is constraining on a highly unique column @SalesOrderID that is part

of the clustered index key. So, you would think that SQL Server would be smart

enough to know that an “Index Seek” operation would be quicker for resolving my

jack-of-all-trades SP by going through the clustered index. But as we can see

from the above executing plan that SQL Server isn’t that smart. So why is

that?

When the optimizer sees the “@PARM IS NULL” condition, it

appears like a constant to the SQL Server optimizer. Therefore the optimizer

assumes no index will be useful to resolve the “(<TableColumn> = @PARM1

or @PARM1 IS NULL)” condition due to the constant being in the WHERE

condition. Therefore, SQL Server decides to use an Index Scan operation to

resolve this type of condition. The more parameters you have in our

jack-of-all-trades SP the worse the performance is due to the number of SCAN

operations required for each passed parameter.

Optimizing the Jack-Of-All-Trades SP

You don’t have to settle for a poor performing

jack-of-all-trades SP by continuing to code these types of queries like the one

above. Let’s explore what my JackOfAllTrades SP does and re-write it so SQL

Server’s query optimizer will create a more optimal execution plan.

As state above the real problem with the jack-of-all-trades

SP is the fact that for every parameter you need to have an “OR” condition that

checks to see if the parameter passed IS NULL. If we can eliminate the

requirement for this constant expression than SQL Server will be able to create

a plan that will use an INDEX SEEK operation. So how can we eliminate the @PARM

IS NULL requirement? The answer is by using parameterized dynamic SQL.

Ok before you start shooting me flaming arrows over

suggesting dynamic SQL, hear me out. I know you are already thinking that with

dynamic SQL I might introduce SQL injection into my solution. However, as you

will see I will not be increasing the risk of SQL injection, because I will

just be building dynamic SQL code that passes my SP parameters to another

system SP “sp_executesql” that will be using these parameters within the

dynamic SQL code that I build.

The system SP “sp_executesql” allows you to develop a T-SQL

statement that contains parameters, and allows you to define and pass the

values for those parameters to the dynamic SQL by passing parameters to the“sp_executesql”

SP when executing this SP. A T-SQL statement that is executed this way is

commonly called parameterized SQL. There are a number of different reasons to

use parameterized SQL, but the scope of this discussion is how to use

parameterized SQL to improve the performance of a jack-of-all-trades SP. Here

I have re-written the query above using a dynamically built parameterized T-SQL

SELECT statement:

CREATE PROCEDURE JackOfAllTrades_V2 (@SalesOrderID int = NULL

,@SalesOrderDetailID int = NULL

,@CarrierTrackingNumber nvarchar(25) = NULL)

AS

DECLARE @CMD NVARCHAR(max)

DECLARE @WHERE NVARCHAR(max)

SET @CMD = ‘SELECT * FROM AdventureWorks.Sales.SalesOrderDetail ‘

SET @WHERE = ”

IF @SalesOrderID IS NOT NULL

SET @WHERE = @WHERE + ‘AND SalesOrderID = @SalesOrderID ‘

IF @SalesOrderDetailID IS NOT NULL

SET @WHERE = @WHERE + ‘AND SalesOrderDetailID = @SalesOrderDetailID ‘

IF @CarrierTrackingNumber IS NOT NULL

SET @WHERE = @WHERE + ‘AND CarrierTrackingNumber = @CarrierTrackingNumber ‘

IF LEN(@WHERE) > 0

SET @CMD = @CMD + ‘ WHERE ‘ + RIGHT(@WHERE,LEN(@WHERE) – 3)

EXEC sp_executesql @CMD

, N’@SalesOrderID int

,@SalesOrderDetailID int

,@CarrierTrackingNumber nvarchar(25)’

,@SalesOrderID = @SalesOrderID

,@SalesOrderDetailID = @SalesOrderDetailID

,@CarrierTrackingNumber = @CarrierTrackingNumber

Let me walk you through this code so you understand the

dynamic and parameterized parts of this code. This SP starts out be setting

the variable @CMD to the basic SELECT statement I need to run without the WHERE

statement. I then set the @WHERE variable to an empty string. I then go

through a series of four different IF statements. The first three IF statement

check to see if each of the parameters being passed is NOT NULL. If a

particular parameter is NOT NULL then I append a condition to the @WHERE

variable for that parameter. Since I already checked and determined that the

parameter was NOT NULL, I don’t need to add the IS NULL condition to my WHERE

clause as was done in the original code in my SP. Instead all I need to add it

the <TableColumn> = @PARM condition to the @WHERE variable. The last IF

statement determines if the @WHERE variable has been populated with at least

one condition, and if it has then it appends the @WHERE variable to the @CMD

variable.

Note the @WHERE variable is the dynamic part of this code.

But I don’t place the actual text of the parameter into the @WHERE variable,

but instead I only place a reference to my parameters in the WHERE condition.

Therfore my final dynamic T-SQL statement basically only contains my original

SELECT statement and the WHERE clause no longer needs the IS NULL condition to

constraint the data.

Lastly I use the “sp_executesql” SP to execute my dynamic

parameterized T-SQL statement. To do this I pass 5 parameters to this system

SP. The first one of these parameters is my dynamic T-SQL @CMD variable. The

second parmeter declares all the possible variables I might have in my

parametrized query, and their data types. For the last three parameters that

are passed to my SP I just pass them on as parameters to the system SP just

like they were originally passed on in my first jack-of-all-trades SP. So as

you can see here I haven’t made my dynamic SQL any more vulnerable to SQL

injection then my original SP, because I didn’t use the actual values of my

parameters to populate the @WHERE variable. I am just passing those parameters

on as variables to my parameterized dynamic T-SQL via the “sp_executesql”

system SP.

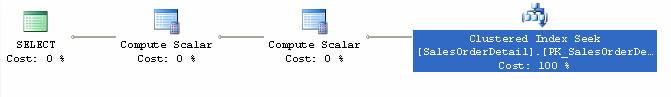

Now let’s run the code for my re-written jack-of-all- trade

SP by running the following statement:

EXEC JackOfAllTrades_V2 @SalesOrderID = 43659

When I run this against the AdventureWorks database on my

server I get the following execution plan:

By comparing this execution plan with the prior one, you can

see it is simpler and it uses a “Cluster Index Seek” operation to resolve this

SP. The SQL Server query engine is able to using this operation because my

dynamic T-SQL no longer has the “@PARM IS NULL” statement in it. Since I have

simplified my T-SQL statement by using dynamic SQL and eliminating the IS NULL

constraint, SQL Server is now able to pick a more optimal plan for my V2

version of my jack-of-all-trades SP.

So how much of an improvement does this actually achieve?

Remember I’m only looking for records from the SalesOrderDetail table that have

a SalesOrderID equal to 43659. My original jack-of-all-trades SP did an INDEX

SCAN operation to resolve the query. This means it had to read all the way

through the index before it could complete my request to return records that

contain a single SalesOrderID value. Whereas the V2 version of my

jack-of-all-trades SP is able to use an INDEX SEEK operation against the

clustered Index key on the SalesOrderDetail table to get directly to the

specific records that contain the SalesOrderId equal to 43659 very quickly. The

INDEX SEEK operation is much more optimized then using the INDEX SCAN

operation, but how much?

Measuring the I/O savings by using the V2 version of the

jack-of-all-trades SP can be accomplished a number ways. Let’s measure the I/O

improvements by running the following T-SQL statements:

SET STATISTICS IO ON

GO

EXEC JackOfAllTrades @SalesOrderID = 43659

GO

EXEC JackOfAllTrades_V2 @SalesOrderID = 43659

GO

Here I have used the “SET STATISTICS IO ON” statement so the

output of running my two SPs will show me the number of I/Os each command

required to resolve it query. Below is the output I get from the above T-SQL

statements:

(12 row(s) affected)

Table ‘SalesOrderDetail’. Scan count 1, logical reads 264, physical reads 0, read-ahead reads 0,

lob logical reads 0, lob physical reads 0, lob read-ahead reads 0.

(1 row(s) affected)

(12 row(s) affected)

Table ‘SalesOrderDetail’. Scan count 1, logical reads 3, physical reads 0, read-ahead reads 0,

lob logical reads 0, lob physical reads 0, lob read-ahead reads 0.

(1 row(s) affected)

By looking at this output, we can see that my original

jack-of-all-trades SP performance is 1 scan and 264 logical reads. Whereas my

V2 version of the jack-of-all-trades SP did the same number of index scans, but

was able to resolve the query by performing only 3 logical reads. This is a

savings of 261 I/O, which in itself might not seem significant. However, what

if you where calling the SP over and over again in a loop of some kind. It doesn’t

take many calls before you will start to notice a significant performance

improvement using the V2 version over the original version of the

jack-of-all-trades SP.

Improving I/O Counts Using Dynamic Parameterized SQL

Knowing why SQL Server looks at your code and picks a

sub-optimal plan is what you need to take away from this article. Here the SQL

Server optimized was taking the “@PARM IS NULL” logic in my code as a

constant. Therefore, it determined it needed to perform an INDEX SCAN

operation to resolve the first version of my jack-of-all-trades SP. As we all

know, SCAN operations are typically slower than SEEK operations. By writing

dynamic T- SQL code in my V2 version of my jack-of-all-trades SP I was able to

eliminate having a constant expression in the WHERE clause of my T-SQL

statement. This allowed the optimizer to pick a better route at resolving my

T-SQL by using a CLUSTERED INDEX SEEK operation. If you have one of these

jack-of-all-trades SP at your site try re-writing it using dynamic parameterize

SQL and see what kind of performance gains you are able to obtain.

»

See All Articles by Columnist Gregory A. Larsen